Product Management for Voice AI Workflows

Image generated by Google Gemini

Picture navigating a frustrating issue with your bank. You call the customer service line, desperately attempting to explain a fraudulent charge before your flight takes off. The voice on the other end greets you, asks for details, and when you finish explaining, there is silence. One agonizing second passes. Then two. By the third second, your heart rate elevates and your patience snaps. "Hello? Are you even there?" you ask, right as the agent finally begins to speak, causing the system to stutter and fail.

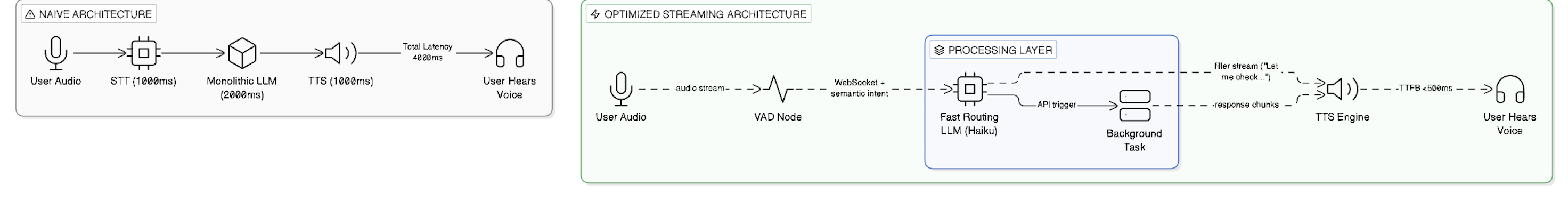

In a traditional text-based ChatGPT window, a three-second delay is perfectly normal. Users perceive the blinking cursor as the machine "thinking," which intuitively aligns with the magic of reading a generated response. But transferring this textual delay into a live phone call shatters the illusion entirely. Driven by the rush to commercialize generative AI, many companies are simply stapling a Speech-to-Text (STT) transcriber onto the front of a monolithic text LLM, and bolting a Text-to-Speech (TTS) synthesizer onto the back. They treat voice interfaces as just another frontend for text processing, ignoring the deeply ingrained psychological rules of human conversation.

Voice AI fundamentally alters the product equation: because human trust breaks almost instantly when conversational flow is interrupted, product teams must often trade heavy computational reasoning for low-latency streaming.

The psychological toll of latency in audio environments is profound. Human conversational turn-taking is not a series of isolated data transmissions; it is a rapid, subconscious social ballet. Linguistic research demonstrates that the typical gap between turns in human speech is roughly 200 milliseconds (Levinson). When an automated agent takes 1,500 milliseconds—an eternity in audio time—to formulate a response, the human brain instinctively registers the interaction not as artificial intelligence, but as incompetence or technical failure. This visceral frustration leads directly to call abandonment, destroying the business value of the AI deployment before the agent even manages to offer a solution. Users tolerate mistakes from human operators, but they harbor almost zero tolerance for machines that make them feel ignored.

When I led the 0-to-1 building of Revlyq, an enterprise Voice AI platform for lead capture, we quickly realized that standard text-first product requirements were irrelevant. A product manager cannot simply ask an LLM to follow a heavy, 2,000-word system prompt to evaluate 15 different customer variables before opening its digital mouth. Instead, building a real voice product demands an obsession with Time-to-First-Byte (TTFB). You have to design the backend to stream the response out as audio chunks the moment the model starts generating, rather than waiting for the full reply, often trading nuance for sheer speed. Even more critically, the product must master "barge-in": the ability for the human user to interrupt the machine seamlessly. If the Voice Activity Detection (VAD) cannot distinguish between a user taking an audible breath and an actual verbal interruption, the agent either cuts itself off at every stray noise or keeps talking over the caller, and the user feels trapped in a terrifying, unbreakable corporate voicemail maze.
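To make those two ideas concrete, here is a minimal sketch, not Revlyq's actual implementation. `chunk_for_tts` flushes text to the synthesizer at the first clause boundary instead of waiting for the whole reply, and `BargeInDetector` only confirms an interruption after sustained voiced audio, so a short breath is ignored. Every name, the 20 ms frame size, the energy threshold, and the frame count are assumptions chosen for illustration; a production system would likely use a trained VAD model rather than raw energy.

```python
from dataclasses import dataclass, field
from typing import Iterable, Iterator

# Assumption: any of these marks a point where it is safe to start speaking.
CLAUSE_END = (".", "!", "?", ",", ";", ":")


def chunk_for_tts(tokens: Iterable[str], min_chars: int = 12) -> Iterator[str]:
    """Yield speakable chunks as soon as a clause boundary arrives,
    instead of buffering the full LLM response."""
    buffer = ""
    for token in tokens:
        buffer += token
        stripped = buffer.rstrip()
        if len(stripped) >= min_chars and stripped.endswith(CLAUSE_END):
            yield buffer.strip()
            buffer = ""
    if buffer.strip():  # flush whatever is left when the stream ends
        yield buffer.strip()


@dataclass
class BargeInDetector:
    """Energy-based barge-in check over fixed-size audio frames (assumed 20 ms)."""
    energy_threshold: float = 0.02  # hypothetical RMS level for "voiced"
    min_speech_frames: int = 8      # 8 x 20 ms = 160 ms of sustained voice
    _voiced_run: int = field(default=0, init=False, repr=False)

    def update(self, frame_rms: float) -> bool:
        """Feed one frame's RMS energy; return True once an interruption is confirmed."""
        if frame_rms >= self.energy_threshold:
            self._voiced_run += 1
        else:
            self._voiced_run = 0  # a gap resets the run, so short bursts never trigger
        return self._voiced_run >= self.min_speech_frames


def interrupts(frames: Iterable[float]) -> bool:
    """Return True if any point in the frame sequence confirms a barge-in."""
    detector = BargeInDetector()
    return any(detector.update(rms) for rms in frames)
```

The trade-off sits in `min_speech_frames`: a lower value makes the agent stop talking sooner when the caller speaks, but also makes it more likely to cut itself off on a cough or a breath.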

The engineering and economic logic of voice AI supports this pivot toward speed over deep reasoning. A monolithic LLM receives the whole conversation history as its prompt on every turn, so, absent caching or truncation, the longer a conversation goes on, the more input the model must process before it can respond and the slower each reply tends to become. By decoupling the architecture, using light, fast models (like Claude Haiku or specialized open-source iterations) strictly for conversational routing, and offloading heavy lifting to asynchronous background tasks, platforms can achieve sub-600ms latency. Lower latency means fewer user frustration signals, which in turn should translate into higher task completion rates and realized business value.
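The decoupling described above can be sketched with `asyncio`, under stated assumptions: `fast_reply` stands in for a small routing model and `heavy_analysis` for slow background reasoning. Both are hypothetical placeholders that simulate latency with `asyncio.sleep`, and the sleep durations are illustrative rather than measured.

```python
import asyncio
import time


async def fast_reply(utterance: str) -> str:
    """Stand-in for a light model that only keeps the conversation moving."""
    await asyncio.sleep(0.02)  # simulated latency, not a benchmark
    return "Got it, let me look into that charge."


async def heavy_analysis(utterance: str, results: dict) -> None:
    """Stand-in for slow reasoning that runs off the critical path."""
    await asyncio.sleep(0.3)  # simulated latency, not a benchmark
    results["analysis"] = f"reviewed: {utterance}"


async def handle_turn(utterance: str, results: dict):
    """Start the heavy work in the background, then answer immediately."""
    started = time.perf_counter()
    background = asyncio.create_task(heavy_analysis(utterance, results))
    reply = await fast_reply(utterance)
    elapsed_ms = (time.perf_counter() - started) * 1000
    return reply, elapsed_ms, background


async def run_demo() -> dict:
    results: dict = {}
    reply, elapsed_ms, background = await handle_turn(
        "I see a charge I did not make", results
    )
    # The caller already has a spoken reply while the analysis is still running.
    pending_at_reply = "analysis" not in results
    await background  # in a real call, the result would feed a later turn
    return {
        "reply": reply,
        "elapsed_ms": elapsed_ms,
        "pending_at_reply": pending_at_reply,
        "analysis": results["analysis"],
    }


if __name__ == "__main__":
    print(asyncio.run(run_demo()))
```

The design choice is that the time the caller waits depends only on the fast path; the slow task can finish whenever it finishes and surface its result in a later turn.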

Moving from text to voice is not a simple UI update. It requires a product leader to step outside the comfortable paradigm of visual software and directly engage with the fragile psychology of human speech. The teams that ultimately win the Voice AI market will not be those boasting the models with the highest reasoning scores; they will be the teams that understand how to make their machines listen, pause, and respond like humans.

Works Cited

Levinson, Stephen C. “Turn-taking in Human Communication – Origins and Implications for Language Processing.” Trends in Cognitive Sciences, vol. 20, no. 1, 2016, pp. 6-14.